Are you playing a game you can (afford to) win?

At some point in my CISO career, I've advocated strongly for all of these controls:

🚧 We'll require our users to choose longer passwords, they're harder to crack!

🚧 We'll require our users to change their passwords every couple of months so an attacker who gets an old copy of the password database can't crack so many current hashes!

🚧 We'll restrict screenshots on mobile devices, there's data there!

🚧 We'll segment the network to limit north/south/east/west movement, so Department A can't get at Dept. B's sensitive data and vice-versa!

🚧 We will limit access to email and the web -- where risky content lives -- for users who fail enough simulated phishing campaigns!

I was increasing attacker friction, which was definitely my job! I genuinely believed I was doing good for my organization.

I was wrong.

What changed my mind was, in large part, watching each of these strategies fail to make our environment more breach-resistant. Segmentation did little to slow down a red team that was living off the land. People[1] were taking pictures of their phones with their other phones, plus they couldn't grasp why we would restrict the smallest possible form factor while allowing screenshots on devices with a much larger attack surface, literally.[2] Longer passwords were getting cracked routinely with cheap, rented GPUs[3] executing tens of billions of hash lookups per second, because human nature is not to create, remember, and correctly type random[4] passwords. The friction for bad actors remained low, while forcing longer passwords without relenting on complexity requirements made friction for everyone else unjustifiably high!

To wit:

Source:

Source: zxcvbn.

As far as passwords go, DolphinBoulevard2025[5] would be considered very strong[6] by every quantifiable measure of traditional InfoSec industry password guidance and visual strength meters...[7] except how much entropy it represents to an attacker using hashcat with a decent GPU. There was a point in my career[8] when I believed that gamifying the every-few-moons password composition ritual for our users was a winning strategy. Watching it fail[9] made me realize that I had been asking our users to play games that, over time, they couldn't possibly win.
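A rough sketch of that gap, in Python. The first function scores the password the way a character-class strength meter might, as if all 20 characters were random. The second scores it the way a pattern-aware attacker might, as two dictionary words plus a year. The wordlist size (100,000), the number of plausible year suffixes (100), and the guess rate (10 billion per second) are illustrative assumptions, not measurements; real cracking speed varies enormously with the hash algorithm.

```python
import math


def naive_bits(password: str) -> float:
    """Bits of entropy if every character were drawn uniformly at random."""
    pool = 0
    if any(c.islower() for c in password):
        pool += 26
    if any(c.isupper() for c in password):
        pool += 26
    if any(c.isdigit() for c in password):
        pool += 10
    if any(not c.isalnum() for c in password):
        pool += 33  # printable ASCII symbols, including space
    return len(password) * math.log2(pool) if pool else 0.0


def pattern_bits(wordlist_size: int, words: int, suffix_choices: int) -> float:
    """Bits of entropy for 'N dictionary words + a suffix' (hypothetical attacker model)."""
    return words * math.log2(wordlist_size) + math.log2(suffix_choices)


def seconds_to_exhaust(bits: float, guesses_per_second: float) -> float:
    """Time to try every candidate in a search space of 2**bits."""
    return 2 ** bits / guesses_per_second


if __name__ == "__main__":
    meter_view = naive_bits("DolphinBoulevard2025")
    # Assumed: 100,000-word list, 2 words, 100 plausible year suffixes.
    attacker_view = pattern_bits(wordlist_size=100_000, words=2, suffix_choices=100)
    print(f"strength-meter view: {meter_view:.0f} bits")
    print(f"pattern-aware view:  {attacker_view:.0f} bits")
    # Assumed: 1e10 guesses per second against a fast, unsalted hash.
    print(f"seconds to exhaust pattern space: {seconds_to_exhaust(attacker_view, 1e10):.0f}")
```

Under these assumptions the same 20 characters are worth roughly 119 bits to the strength meter and roughly 40 bits to the attacker, which is the difference between "never" and "minutes."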

Unwinnable games create unnecessary friction. Selecting, remembering, and correctly typing a long, complex password? An unwinnable game. Better to improve user experience and migrate to FIDO2 or, at minimum, biometrics, which provide both superior UX and security.[10]

Placing a server into the correct zone based on data type, lifecycle, future role-based access needs, etc., and expecting zoning to support microservice-oriented architectures where everything is an API? An unwinnable game. Better to double down on healthy architecture patterns and self-defending applications.

Spotting well-crafted phishing campaigns? An unwinnable game.[11] Better to improve the denominator and limit high-risk encounters... with dangerous content by more effectively dropping or quarantining it at the edge, and with social engineers by creating friction for their preferred tools, especially where those don't overlap with tools on the company formulary[12]. Plus, if we could keep our workforce productive (low good-actor friction) while reducing their run-ins with web and email channel attacks (high bad-actor friction), why couldn't we do that proactively and for everyone?

I had to unlearn what our industry had been teaching us for decades, i.e. to think of our control plane as a road where we controlled the placement of speed bumps, where more was always better. Instead, I started to see our control plane as a body of water: something that is smooth and liquid when approached at normal speeds[13], and that increases resistance the harder it's attacked, ultimately acting like a solid when a cinderblock is dropped from a sufficient height[14].

including me, for occasional troubleshooting assistance ↩︎

and the game was lost the moment I launched into a nuanced explanation of the BYOD risk ↩︎

or, if you insist, complete rigs purchased for ~$5001 in 2018 dollars ↩︎

this is the key here, where UX meets (and crashes headfirst into) entropy ↩︎

20 characters, upper / lower / numeric ↩︎

we still do this, in the voice to this day, absolute legend 🐐 ↩︎

a fun exercise was to drop that exact password into security.org, passwordmonster.com, and bitwarden and see them return 500 quadrillion years, 12 days, and 1 month, respectively, as their prediction of time-to-crack ↩︎

as measured by red-team offline cracking success rate ↩︎

a good semantic trap to avoid here is the "you have only so many fingers you can lose" argument, the per-capita rate of breaches that begin with a compromised password vs. a compromised biometric is compelling ↩︎

"click here for an important update on this year's bonus plan funding" would get high double-digit click through rates in most companies ↩︎

low friction for good actors ↩︎

high friction for bad actors ↩︎

Optimizing for μ

Every control I implemented was liable to change the coefficient of friction for both good and bad actors. Long before I started thinking about user experience for good actors and how great UX bred great security outcomes[1] I began training myself to routinely consider what the cost of winning was for attackers. This wasn't about good-actor friction yet, and still I had a nagging feeling that the interventions I was advocating for looked like a fatally-flawed, ineffective "something must be done... here's something" response to our threat model. I knew I could do better.

I grew fascinated by the asymmetry of attacker and defender dynamics. How much did it cost for the attacker to win the game once? Was the cost different to win repeatedly (i.e. was it luck vs. skill[2])? How much would it have cost the defender to win once or repeatedly?

When ByBit was hacked a couple weeks ago, the headlines focused on the $1.4B (with a B!) loss suffered. At the heart of the affair was a high level of attacker sophistication[3] coupled with a high level of trust granted a third-party javascript module -- something defenders deal with daily, enough so that there's a low-friction defense built into most modern browsers for this: sub-resource integrity checking. Using this intervention would have created high friction for attackers[4] with relatively low cost[5] for the product team.
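For readers who haven't used sub-resource integrity: the page author pins a hash of the expected script in the `integrity` attribute of the `<script>` tag, and the browser refuses to run a file whose hash doesn't match. The Python below is only a sketch of that mechanism, not of anything specific to the ByBit incident; the function names and the sample script bytes are made up for illustration, and in practice the browser performs the check natively.

```python
import base64
import hashlib
import hmac

# Hash algorithms permitted in an integrity value.
ALLOWED = {"sha256", "sha384", "sha512"}


def sri_value(content: bytes, algorithm: str = "sha384") -> str:
    """Build an integrity string of the form '<alg>-<base64 digest>'."""
    if algorithm not in ALLOWED:
        raise ValueError(f"unsupported algorithm: {algorithm}")
    digest = hashlib.new(algorithm, content).digest()
    return f"{algorithm}-{base64.b64encode(digest).decode('ascii')}"


def matches(content: bytes, integrity: str) -> bool:
    """Mimic the browser-side check: recompute the hash and compare to the pin."""
    algorithm = integrity.partition("-")[0]
    if algorithm not in ALLOWED:
        return False
    return hmac.compare_digest(sri_value(content, algorithm), integrity)


if __name__ == "__main__":
    reviewed = b"console.log('wallet ui v1');"        # hypothetical vetted script
    tampered = b"console.log('wallet ui v1');steal()"  # hypothetical altered script
    pin = sri_value(reviewed)
    print(f'<script src="app.js" integrity="{pin}" crossorigin="anonymous"></script>')
    print("reviewed script loads:", matches(reviewed, pin))
    print("tampered script loads:", matches(tampered, pin))
```

The cost to the product team is regenerating the pin whenever the dependency is deliberately updated; the cost to an attacker is that silently swapping the hosted file no longer works on its own.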

I started to apply this mode of thinking to a lot of the compromises I read about:

💸 What would it cost to subtly, maliciously alter Google Maps' lowest-latency-path navigation logic? Roughly the price of 99 used smartphones.

💸 What would it cost to develop an effective implant for a leading commercial router? About $60,000 in hardware plus years of engineering knowledge.

💸 Defeating common consumer-grade locks? There's a YouTube channel for that!

💸 Injecting a backdoor into engineer laptops at Google? $10[6]!

it turns out that users who aren't constantly frustrated with the way the water in the control plane slows them down are far less tempted to come up with creative workarounds in the name of their own productivity, i.e. the thing their bonus is based on. ↩︎

See Annie Duke's Thinking in Bets for a great treatise on how to assign these characteristics ↩︎

targeting engineers with access to Safe{Wallet}'s source code, finding a working exploit, obfuscating their code, etc., does not sound cheap! ↩︎

not going to go out on the prevented-the-breach limb altogether, these were determined adversaries with both high skill and high motivation ↩︎

periodically checking for version updates and deciding when to bump up to the latest... which itself has significant non-security benefits for stability of operations ↩︎

plus, of course, whatever it costs to become Michał Zalewski, which is... a LOT ↩︎

Putting it all together

These things feel fundamentally true[1] today about my CISO job:

- every control will eventually be defeated by an attacker, my job is to effectively increase attackers' cost-of-winning by increasing their friction (and, ideally, only their friction);

- every additional bit of attacker friction creates incremental friction for non-attackers, my job is to effectively minimize friction for all non-attackers;[2]

- risk = f(hazard, outrage),[3] also "more things can happen than will"[4], my job is to effectively manage hazard and outrage as co-dependent factors that ultimately optimize for my company's business outcomes.[5]

If I wasn't going to ask our users to play games they cannot win, and if I wasn't going to fall into the "something must be done... therefore any 'something' we do is justified in the name of controls" trap... what, then, was a better way of thinking about the roadmap for our control plane?

I like asking three questions when evaluating the net benefit of a current or new control:

❓How much does this control increase the attacker's cost of winning?

For example, periodic forced password changes were so ineffective at stopping attackers[6] that NIST recently deprecated its decades-long recommendation favoring them.

❓How much does this control reduce all other users' likelihood of creating value?

As chat interfaces to large language models became popular over the last couple of years, I surprised a lot of people by advocating for well-paced adoption with thoughtful guard rails and targeted, data-dependent technical controls as an alternative to carte blanche denial of access. When asked why I, as CISO, was taking an apparently contrarian position, my answer was, "an attacker wanting to steal data over the web channel is a generalized problem that we are actively defending, and if I can think of more reliable ways to conduct exfil, our potential attackers can think of ten times as many... in the meantime, having a user population that is intentionally behind on acquiring prompt engineering literacy is bad for our company, let's make it my job as CISO to make it safe for them to use a handful of reputable LLMs, let's enter into commercial agreements where we can lean on higher levels of technical controls, and let's not miss the opportunity to take or preserve first-mover advantage!"

❓What is the outrage factor across various options of administrative or technical controls, and does the proposed change in attacker/non-attacker friction leave the enterprise in better shape than it was prior?

Or... if something must be done about a particular threat, is this the right something, is the treatment better/worse for the organism as a whole than the disease? This may be the most nuanced of the three, as it reveals the challenge of information security as an asymmetric confrontation between attacker and enterprise. Simulated phishing campaigns are maybe best at training users to spot simulated phishing campaigns and have been shown to have marginal benefits[7] in preventing actual phishing campaigns. Still, the outrage factor for doing nothing to educate a multi-generational workforce on emerging threats is too high to abandon the practice of security awareness altogether.[8]

Generalized further, finding and keeping my is-it-worth-it line current and optimized is one of the things that defines my performance in my CISO role.

As a CISO... is your line closer to the purple? one of the grays? NOTA?[9]

If you squint at the diagram above, you'll recognize a lot of the MITRE enterprise mitigations represented, with up-and-to-the-right↗️ approaching the friction of "water," i.e. increasing bad-actor cost-to-win while minimally impairing good-actor UX. These controls sit in a no-regrets zone of sorts, offering minimal negative friction for end-users while doing a lot to slow down attackers, especially compared to doing nothing.

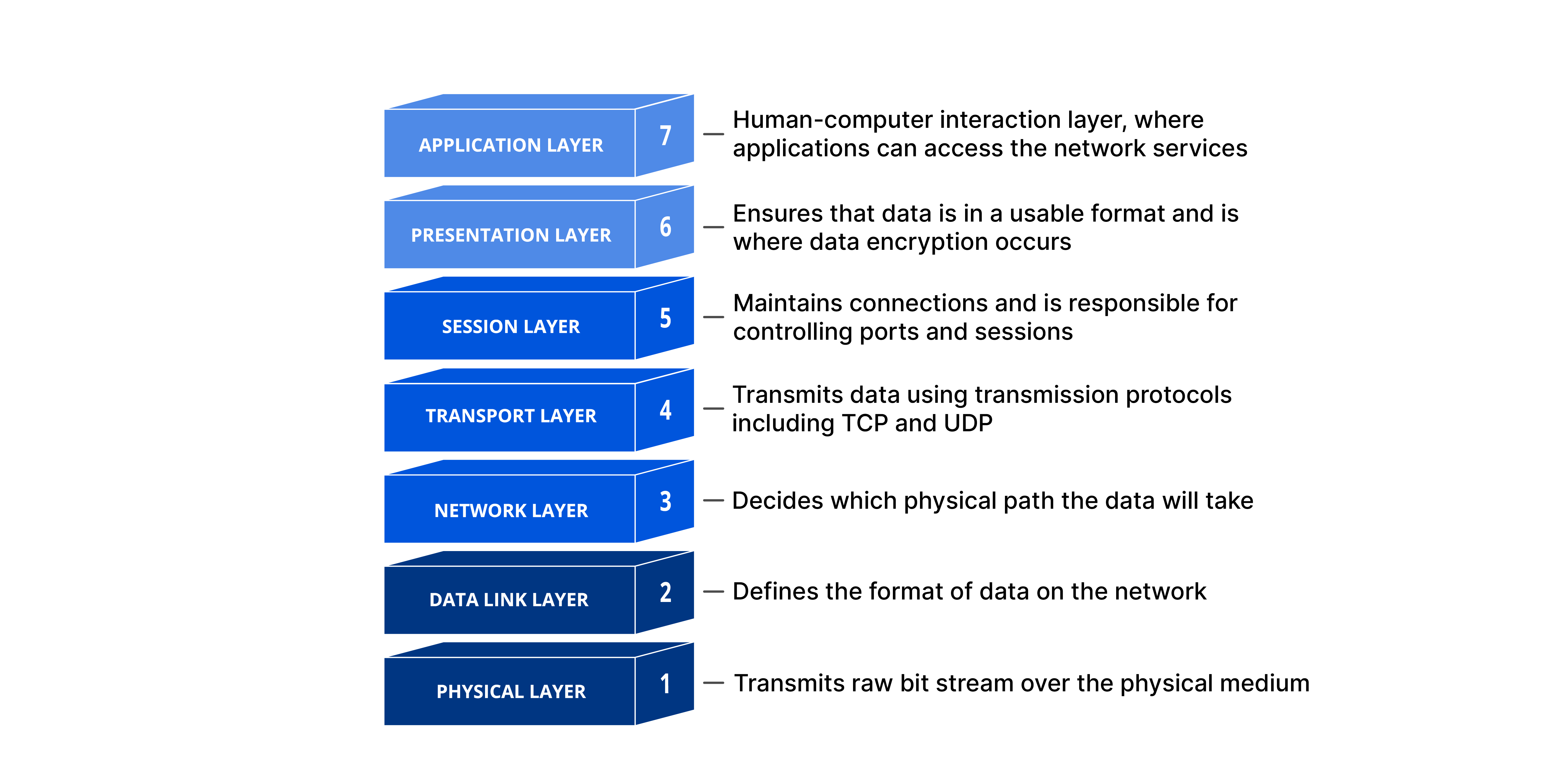

Towards the center, we start introducing some non-attacker friction and there are more trade-offs to consider... and many are still good investments net-net, especially considering the outrage factor of not having, say, an edge firewall dropping obviously unnecessary traffic. That feels true even if it's easy to argue that, in this day and age, the adoption of APIs as a transactional primitive has pushed HTTP and HTTPS/TLS from a layer-7 protocol[10] to something that looks a lot like it's delivering the basic capabilities of layer-4 -- and an edge firewall with an allow https... deny all ruleset is functionally equivalent to an allow all.[11]

The trade-offs on control friction get increasingly interesting moving down-and-to-the-left↙️. An effective data loss prevention[12] program can be the difference between walking an insider threat out of the building and seeking damages in a lawsuit[13] ... and firing a blocking alert[14] with aggressive matching can feel like death-by-a-thousand-cuts for an organization[15] that routinely handles sensitive information in the course of business-as-usual.

In a world where every control will eventually be defeated,[16] a good CISO trick is to slow down and ask the right questions.[17] The one I have been training myself to ask a lot is:

is the additional friction I'm creating for good actors justified by the disproportionately[18] higher friction I'm creating for bad ones?
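One way to make "disproportionately" concrete is a back-of-the-envelope break-even calculation. This is a hypothetical reading of the question, not a formula from any framework: it treats friction as an arbitrary per-person unit and asks how much friction each attacker must feel for the aggregate attacker friction to equal the aggregate good-actor friction. The headcounts below are invented for illustration.

```python
def break_even_attacker_friction(
    friction_per_good_actor: float,
    good_actors: int,
    bad_actors: int,
) -> float:
    """Per-attacker friction at which total attacker friction equals total good-actor friction.

    Friction is in arbitrary units (minutes, dollars, annoyance points);
    the aggregate-comparison rule itself is an illustrative assumption.
    """
    if bad_actors <= 0:
        raise ValueError("need at least one bad actor to compare against")
    return friction_per_good_actor * good_actors / bad_actors


if __name__ == "__main__":
    # Hypothetical environment: 10,000 employees, 5 active attackers.
    needed = break_even_attacker_friction(1.0, good_actors=10_000, bad_actors=5)
    print(f"each attacker must feel {needed:,.0f}x the friction each employee feels")
```

With these made-up numbers, a control that costs every employee one unit has to cost every attacker 2,000 units just to break even in aggregate, which is why the "water" controls that add near-zero good-actor friction are so attractive.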

Credits, etc.: All text AIL-0[19], all section header images AIL-3 generated in MidJourney, new sidenotes styling AIL-4[20]. Also, isn't that embedding-in-a-PNG feature of excalidraw nifty?[21]

the fact that it's three is not an intentional Hamilton reference ↩︎

One of my favorite examples of this is the story I heard from a CIO in financial services who was asked to green-light a massive improvement in fraud detection... It turns out that those was-that-REALLY-you texts that we get from our banks after our cards are used are v. good at deterring people using stolen cards! There was a big, untapped fraud reduction opportunity that merely involved sending those texts to (almost) everyone 😒 ↩︎

which include a whole bunch of positive outcomes regarding value creation and an obligation to manage risk of catastrophic adverse events ↩︎

who were already stopped from online brute-force cracking by intruder lockout mechanisms and for whom offline brute-force cracking an entire organization's NTDS.dit extract was an hours-long affair ↩︎

Ho, Mirian, et al.: "Taken together, our results suggest that anti-phishing training programs, in their current and commonly deployed forms, are unlikely to offer significant practical value in reducing phishing risks." ↩︎

I appreciated Matt Linton's thoughtful take on what shifting from negative to positive reinforcement while maintaining high standards and measuring outcomes (I'm a fan of median time until first X reports are received as a metric, as this is what my defender teams have told me is a good threshold for them to confidently begin investigating an attack as being targeted and high-risk) looks like. ↩︎

a more advanced version of this would encode the cost of the control as the size of a bubble... ↩︎

of all the hills I won't die on, strict adherence to OSI is high on the list, just saying. ↩︎

🦆 if it walks, talks, and quacks... ↩︎

or data theft detection if you're feeling extra 🌶️ ↩︎

see Rippling v. Deel or Waymo v. Levandowski, better known as Google v. Uber ↩︎

or a quarantine without release-on-your-own-recognizance ↩︎

if I could only get the Don LaFontaine treatment on this ↩︎

Rory Sutherland does a great bit on this that I won't try to imitate here. ↩︎

since there are so many more good actors than bad ones active in our environment at any given time ↩︎

Shout out to D. Miessler ↩︎

inspired by Molly White and gwern and implemented with significant assistance from claude.ai ↩︎

Watching this go from nifty-idea to shipping feature was so cool, hat tip to the excalidraw team and dwelle, absolute legends! ↩︎